Split Testing Prompts That Help You Find Winning Creatives Faster

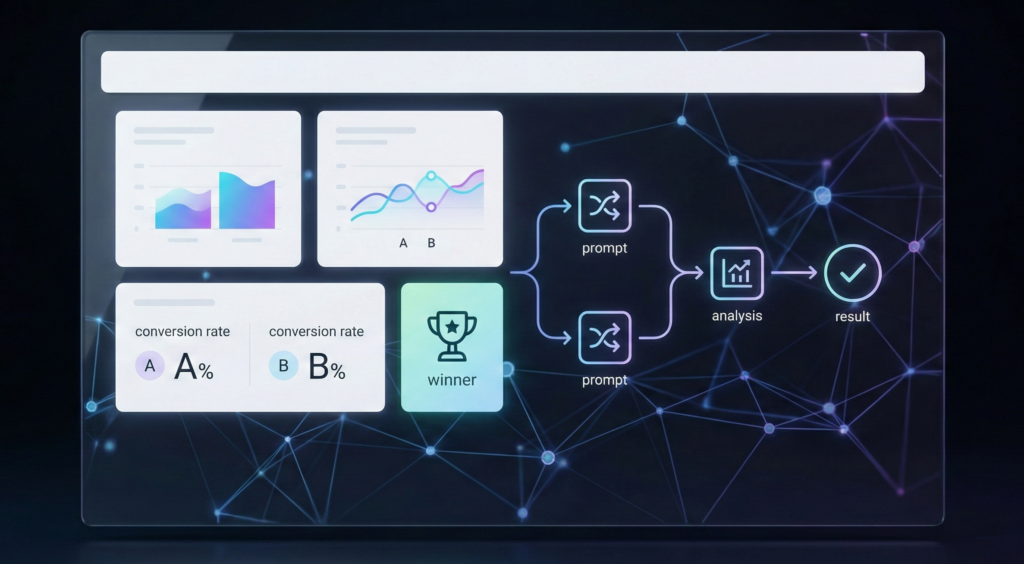

If you have ever felt stuck guessing which creative will work, split testing prompts can feel like a breath of fresh air. Instead of relying on instinct or copying what others are doing, you start making decisions based on real responses. This shift alone can save hours of work and reduce frustration. When you split test prompts, you are not just testing ideas. You are testing how people think, react, and engage.

Many creators focus heavily on visuals, hooks, or captions, but the prompt behind the creative often decides the final output. A small wording change can lead to a completely different result. That is why prompt split testing is so powerful. It helps you understand which instructions generate clarity, emotion, and relevance. Over time, this turns creative work into a repeatable process rather than a guessing game.

Another reason split testing prompts matters is speed. Instead of creating ten different creatives from scratch, you can generate variations quickly by adjusting prompts. This allows you to compare outputs side by side and spot patterns faster. You begin to notice which phrases trigger better storytelling, stronger calls to action, or more engaging visuals.

Split testing also removes emotional attachment from the process. When you test prompts, you stop defending ideas just because you like them. The output speaks for itself. This mindset is especially helpful when you are working with clients, brands, or campaigns where results matter more than personal preference.

Here are a few reasons creators rely on split testing prompts:

- It reduces creative burnout by narrowing down what works

- It creates consistency across campaigns

- It reveals hidden patterns in audience preferences

- It speeds up content production

- It improves ROI by focusing on proven directions

At its core, split testing prompts is about control. You control the variables instead of letting randomness dictate results. Once you understand this, creating winning creatives becomes faster and more predictable.

Helpful resources: If you want a clean primer on A/B testing basics (and how to think about variables), this guide is a solid reference: Optimizely’s A/B testing overview. For platform-level experimentation concepts, this is also useful: Meta guidance on testing/experiments.

How to Structure Split Testing Prompts for Clear Results

Split testing only works when your structure is intentional. Randomly changing words without a plan leads to confusing results. The goal is to isolate one variable at a time so you can clearly see what made the difference. This is where most people go wrong. They change too much at once and end up unsure why one creative performed better.

Start by defining what you are testing. This could be tone, format, audience angle, or storytelling style. Once you choose one variable, everything else stays the same. This creates a clean comparison and makes insights easier to spot.

A simple way to structure split testing prompts is to keep a base prompt and modify only one line per version. For example, the base instruction stays the same, but the emotional angle changes. One version might focus on curiosity, while another leans into urgency. The rest of the prompt remains untouched.
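Here is a minimal Python sketch of the base-prompt idea. It assumes you keep your prompt as a few named parts. The part names (`task`, `audience`, `angle`, `format`), the helper functions, and the wording are hypothetical. Each variant is a copy of the base with exactly one part replaced.

```python
# Single-variable swap sketch. Part names and wording are hypothetical examples.
PART_ORDER = ("task", "audience", "angle", "format")

BASE_PROMPT = {
    "task": "Write a 30-second video ad script for a budgeting app.",
    "audience": "Speak to people who have never tracked their spending.",
    "angle": "Lead with curiosity.",
    "format": "Use three short scenes and end with one call to action.",
}


def build_prompt(parts: dict[str, str]) -> str:
    """Join the parts in a fixed order so only the content can vary."""
    return "\n".join(parts[key] for key in PART_ORDER)


def make_variant(base: dict[str, str], part: str, new_text: str) -> dict[str, str]:
    """Return a copy of `base` with exactly one part replaced."""
    if part not in base:
        raise KeyError(f"unknown prompt part: {part}")
    variant = dict(base)  # copy so the base prompt is never modified
    variant[part] = new_text
    return variant


version_a = BASE_PROMPT
version_b = make_variant(BASE_PROMPT, "angle", "Lead with urgency.")

print(build_prompt(version_a))
print("---")
print(build_prompt(version_b))
```

Every version is built from the same base. Any difference in output can therefore be traced to the one part you changed.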

Common prompt elements you can split test include:

- Tone, such as casual versus authoritative

- Perspective, such as first person versus second person

- Length, such as short punchy output versus detailed explanations

- Emotional trigger, such as fear, excitement, or relief

- Format, such as list-based versus narrative

Consistency is critical. Use the same platform, same creative goal, and same evaluation criteria. This way, your comparison remains fair and useful.
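One way to enforce that consistency is to describe each test setup as a set of fields. Before running anything, you check that two setups differ in exactly one place. This is a hedged sketch. The field names, including `model` and `temperature`, are hypothetical stand-ins for whatever tool and settings you actually use.

```python
# Pre-flight check: two test setups should differ in exactly one field.
# Field names and values are hypothetical.

def changed_fields(a: dict, b: dict) -> list[str]:
    """List every field whose value differs between two setups."""
    return [k for k in sorted(set(a) | set(b)) if a.get(k) != b.get(k)]


def assert_single_variable(a: dict, b: dict) -> str:
    """Return the one changed field, or raise if zero or several changed."""
    diff = changed_fields(a, b)
    if len(diff) != 1:
        raise ValueError(f"expected exactly one changed field, got {diff}")
    return diff[0]


setup_a = {
    "tone": "casual",
    "format": "list",
    "goal": "clicks",
    "model": "model-x",  # hypothetical model name
    "temperature": 0.7,
}
setup_b = {**setup_a, "tone": "authoritative"}
setup_c = {**setup_a, "tone": "authoritative", "temperature": 1.0}

print(assert_single_variable(setup_a, setup_b))  # prints: tone
```

Calling `assert_single_variable(setup_a, setup_c)` raises a `ValueError` because both `tone` and `temperature` changed. That is exactly the kind of muddied comparison that makes results hard to read.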

Practical Split Testing Prompt Frameworks You Can Reuse

Once you understand the basics, the next step is using frameworks you can repeat. Reusable frameworks save time and reduce decision fatigue. They also help teams stay aligned when multiple people are generating creatives.

One effective framework is the single-variable swap. You create one base prompt and swap out only one line each time. This is ideal for beginners and produces clean data.

Another framework is the audience angle test. In this approach, the prompt remains the same except for who it speaks to. One version might target beginners, while another speaks to experienced users. This helps you understand which audience responds more strongly.

A third framework focuses on outcome framing. You test whether people respond better to results-based messaging or process-based messaging. Both can work, but one often outperforms the other depending on the context.

Here are three reusable prompt frameworks you can apply immediately:

Framework 1: Single Variable Swap

- Keep the main instruction the same

- Change only one descriptor such as tone or emotion

- Compare outputs side by side

Framework 2: Audience Angle Test

- Version A speaks to beginners

- Version B speaks to experienced users

- Measure relatability and clarity

Framework 3: Outcome Versus Process

- Version A highlights final results

- Version B explains the journey

- Measure trust and engagement
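The three frameworks can also be written down as reusable data, so anyone on the team can regenerate the same Version A and Version B from a shared base prompt. The sketch below uses hypothetical part names and wording. Each framework is stored as the part that changes plus the two values being compared.

```python
# Reusable framework definitions. Part names and wording are hypothetical.
BASE = {
    "instruction": "Write a short product post for a project-management tool.",
    "audience": "Speak to busy team leads.",
    "tone": "Keep the tone friendly.",
    "focus": "Describe what the tool does.",
}

# framework name -> (part that changes, Version A text, Version B text)
FRAMEWORKS: dict[str, tuple[str, str, str]] = {
    "single_variable_swap": (
        "tone", "Keep the tone friendly.", "Keep the tone authoritative."),
    "audience_angle": (
        "audience", "Speak to beginners who are new to the tool.",
        "Speak to experienced users who already know the basics."),
    "outcome_vs_process": (
        "focus", "Highlight the final results users get.",
        "Explain the journey step by step."),
}


def ab_pair(base: dict[str, str], framework: str) -> tuple[str, str]:
    """Build Version A and Version B prompts that differ only in one part."""
    part, value_a, value_b = FRAMEWORKS[framework]
    version_a = {**base, part: value_a}  # key order of `base` is preserved
    version_b = {**base, part: value_b}
    return "\n".join(version_a.values()), "\n".join(version_b.values())


for name in FRAMEWORKS:
    a, b = ab_pair(BASE, name)
    print(f"== {name} ==\nA:\n{a}\nB:\n{b}\n")
```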

When using these frameworks, document your results. Even simple notes can reveal trends over time. You might discover that your audience consistently prefers direct language or shorter explanations. These insights become creative shortcuts in future campaigns.
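If you want those notes in a form you can sort and filter later, a plain CSV file is enough. This is a minimal sketch using Python's standard `csv` module. The file name `prompt_tests.csv`, the column names, and the example numbers are hypothetical placeholders, not real results.

```python
import csv
import os
from datetime import date

LOG_PATH = "prompt_tests.csv"  # hypothetical file name
FIELDS = ["date", "test_name", "variable", "version", "value",
          "impressions", "clicks", "notes"]


def log_result(row: dict, path: str = LOG_PATH) -> None:
    """Append one result row, writing the header when the file is new or empty."""
    is_new = not os.path.exists(path) or os.path.getsize(path) == 0
    with open(path, "a", newline="", encoding="utf-8") as f:
        writer = csv.DictWriter(f, fieldnames=FIELDS)
        if is_new:
            writer.writeheader()
        writer.writerow(row)


def load_results(path: str = LOG_PATH) -> list[dict]:
    """Read every logged row back as a dict of strings."""
    with open(path, newline="", encoding="utf-8") as f:
        return list(csv.DictReader(f))


# Placeholder numbers for illustration only.
log_result({
    "date": date.today().isoformat(), "test_name": "tone-test-1",
    "variable": "tone", "version": "A", "value": "casual",
    "impressions": 1000, "clicks": 42, "notes": "placeholder numbers",
})
```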

Split testing prompts is not about finding a single perfect prompt. It is about building a library of proven directions. Over time, this library becomes one of your most valuable creative assets.

Turning Split Test Results Into Faster Creative Wins

The real value of split testing prompts comes after the test. Many people stop once they pick a winner, but the deeper insight lies in understanding why it won. This reflection helps you apply the lesson to future projects without starting from zero.
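Before digging into why a version won, check that the gap is bigger than random variation. For click-through rates, a common check is a two-proportion z-test. The sketch below is a simplified illustration with made-up counts. The 0.05 cutoff is a widely used convention rather than a rule, and dedicated experimentation tools typically run this kind of check for you.

```python
from math import erf, sqrt


def two_proportion_z_test(clicks_a: int, n_a: int,
                          clicks_b: int, n_b: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the gap between two click-through rates."""
    rate_a, rate_b = clicks_a / n_a, clicks_b / n_b
    pooled = (clicks_a + clicks_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("no variation in the data; collect more results first")
    z = (rate_b - rate_a) / se
    # Standard normal CDF via the error function: Phi(x) = (1 + erf(x / sqrt(2))) / 2
    phi = 0.5 * (1 + erf(abs(z) / sqrt(2)))
    return z, 2 * (1 - phi)


# Made-up counts for illustration only.
z, p = two_proportion_z_test(clicks_a=40, n_a=1000, clicks_b=62, n_b=1000)
print(f"z = {z:.2f}, p = {p:.3f}")
print("gap unlikely to be noise alone" if p < 0.05 else "not enough evidence yet")
```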

Start by reviewing winning prompts and identifying patterns. Look for repeated elements such as tone, sentence length, or emotional triggers. These patterns become guidelines for future creatives. You no longer need to guess because you have evidence.
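Patterns are easier to spot once results are recorded per variable. The sketch below assumes a hypothetical list of past tests. Each entry notes which variable was tested and which value won, and the code counts winners per variable with `collections.Counter`.

```python
from collections import Counter

# Hypothetical records, not real results.
past_tests = [
    {"variable": "tone", "winner": "direct"},
    {"variable": "tone", "winner": "direct"},
    {"variable": "tone", "winner": "playful"},
    {"variable": "length", "winner": "short"},
    {"variable": "length", "winner": "short"},
    {"variable": "emotion", "winner": "curiosity"},
]


def winning_patterns(tests: list[dict]) -> dict[str, Counter]:
    """Count how often each value won, grouped by the variable tested."""
    patterns: dict[str, Counter] = {}
    for test in tests:
        patterns.setdefault(test["variable"], Counter())[test["winner"]] += 1
    return patterns


for variable, counts in winning_patterns(past_tests).items():
    value, wins = counts.most_common(1)[0]
    total = sum(counts.values())
    print(f"{variable}: '{value}' won {wins} of {total} tests")
```

Treat a value that won once as a lead rather than a rule. A pattern becomes more trustworthy as the same value keeps winning across tests.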

Another important step is iteration. A winning prompt is not the end. It becomes the new base for the next test. By stacking small improvements, you gradually refine your creatives until they feel effortless and effective.

To turn results into faster wins, follow this simple process:

- Identify the winning prompt

- Break down what made it effective

- Apply those elements to new prompts

- Test again with a new variable

- Repeat the cycle
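The cycle can be sketched as a loop where each winner becomes the base for the next test. This is a minimal illustration, assuming a hypothetical `score` function that returns whatever metric you collect. Here it reads from a made-up lookup table so the loop runs without a live campaign.

```python
from typing import Callable

Parts = dict[str, str]


def run_cycle(base: Parts, test_plan: list[tuple[str, str]],
              score: Callable[[Parts], float]) -> Parts:
    """Test each (part, challenger_text) in order; the winner becomes the new base."""
    current = dict(base)
    for part, challenger_text in test_plan:
        challenger = {**current, part: challenger_text}  # change one part only
        if score(challenger) > score(current):  # ties keep the current base
            current = challenger
    return current


# Hypothetical scores keyed by (part, text); stands in for real test results.
FAKE_SCORES = {
    ("tone", "casual"): 0.030, ("tone", "direct"): 0.041,
    ("length", "long"): 0.020, ("length", "short"): 0.035,
    ("cta", "Learn more"): 0.010, ("cta", "Start free"): 0.008,
}


def fake_score(parts: Parts) -> float:
    return sum(FAKE_SCORES[(part, text)] for part, text in parts.items())


start = {"tone": "casual", "length": "long", "cta": "Learn more"}
plan = [("tone", "direct"), ("length", "short"), ("cta", "Start free")]
print(run_cycle(start, plan, fake_score))
```

In practice each call to `score` would be a real test with its own audience and time window. The loop shows the order of decisions, not something you would run in one go.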

Speed improves naturally with practice. As your intuition aligns with data, you will make better decisions faster. You will also waste less time on ideas that do not resonate.

Split testing prompts also improves collaboration. When working with teams or clients, you can show why a creative direction was chosen. This builds trust and reduces back-and-forth revisions. Decisions feel grounded instead of subjective.

In the long run, prompt split testing changes how you think about creativity. You stop chasing trends and start building systems. Winning creatives become less about luck and more about process. When you reach this stage, creating high-performing content feels lighter, faster, and far more sustainable.

By committing to split testing prompts consistently, you give yourself a competitive edge. You move faster, learn quicker, and create with confidence. Over time, that advantage compounds, and finding winning creatives becomes second nature rather than a struggle.